December 19, 2019 report

Robot experiment shows people trust robots more when robots explain what they are doing

A team of researchers from the University of California Los Angeles and the California Institute of Technology has found via experimentation that humans tend to trust robots more when the robots communicate what they are doing. In their paper published in the journal Science Robotics, the group describes programming a robot to report what it was doing in different ways and then showing it in action to volunteers.

As robots become more advanced, they are expected to become more common—we may wind up interacting with one or more of them on a daily basis. Such a scenario makes some people uneasy—the thought of interacting or working with a machine that not only carries out specific assignments, but does so in seemingly intelligent ways might seem a little off-putting. Scientists have suggested one way to reduce the anxiety people experience when working with robots is to have the robots explain what they are doing.

In this new effort, the researchers noted that most work being done with robots is focused on getting a task done; little is being done to promote harmony between robots and humans. To find out if having a robot simply explain what it is doing might reduce anxiety in humans, the researchers taught a robot to unscrew a medicine cap and to explain, in three different ways, what it was doing as it carried out the task.

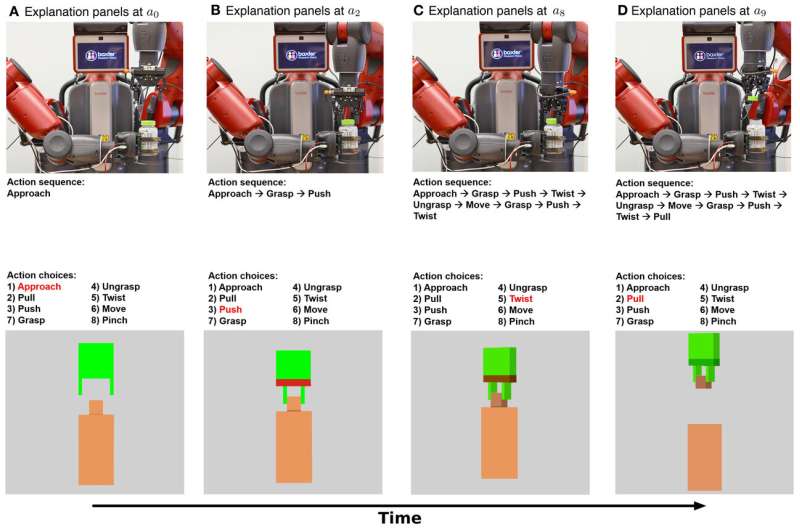

The first type of explanation was called symbolic, or mechanistic, and it involved having the robot display on a video screen each of the actions it was performing as part of a string of actions, such as grasp, push or twist. The second type of explanation was called haptic, or functional. It involved displaying the general function that was being performed as the robot went about each step in a task, such as approaching, pushing, twisting or pulling. Volunteers who watched the robot in action were also shown a simple text message that described what the robot was going to do.

The researchers then asked 150 volunteers to watch the robot open a medicine bottle while they were shown the accompanying explanations. The researchers report that the volunteers gave the highest trust ratings when they were shown both the haptic and symbolic explanations. The lowest ratings came from those who saw just the text message. The researchers suggest their experiment showed that humans are more likely to trust a robot if they are given enough information about what the robot is doing. They say the next step is to teach robots to report why they are performing an action.

More information: Mark Edmonds et al. A tale of two explanations: Enhancing human trust by explaining robot behavior, Science Robotics (2019). DOI: 10.1126/scirobotics.aay4663

© 2019 Science X Network