August 29, 2018 feature

Baidu researchers develop a new auto-tuning framework for autonomous vehicles

Researchers at Chinese multinational tech company Baidu have recently developed a data-driven auto-tuning framework for self-driving vehicles based on the Apollo autonomous driving platform. The framework, presented in a paper pre-published on arXiv, consists of a new inverse reinforcement learning algorithm, an offline training strategy, and an automatic method of collecting and labeling data.

A motion planner for autonomous driving is a system designed to generate a safe and comfortable trajectory to reach a desired destination. Designing and tuning these systems to ensure that they perform well under different driving conditions is a difficult task that several companies and researchers worldwide are currently trying to tackle.

"Motion planning for autonomous driving cars has a lot of challenging issues," Fan Haoyang, one of the researchers who carried out the study, told Tech Xplore. "One main challenge is that it has to deal with thousands of difference scenarios. Typically, we define a reward/cost functional tuning that can adapt those differences in scenarios. However, we find it is a difficult task."

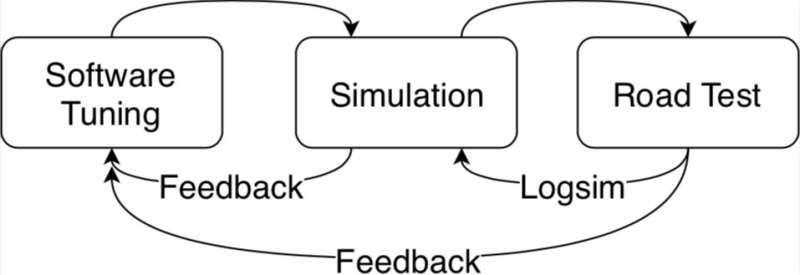

Typically, tuning a reward/cost functional requires extensive work by researchers, as well as resources and time spent on both simulations and road tests. In addition, the environment can change dramatically over time, and as driving conditions become more complicated, tuning the performance of the motion planner becomes increasingly difficult.

"To systematically solve this issue, we developed a data-driven auto-tuning framework based on the Apollo autonomous driving framework," Fan said. "The idea of auto-tuning is to learn parameters from human demonstrated driving data. For example, we would like to understand from data how human drivers balance speed and driving convenience with obstacle distances. But in more complicated scenarios, for example, a crowded city, what can we learn from human drivers?"

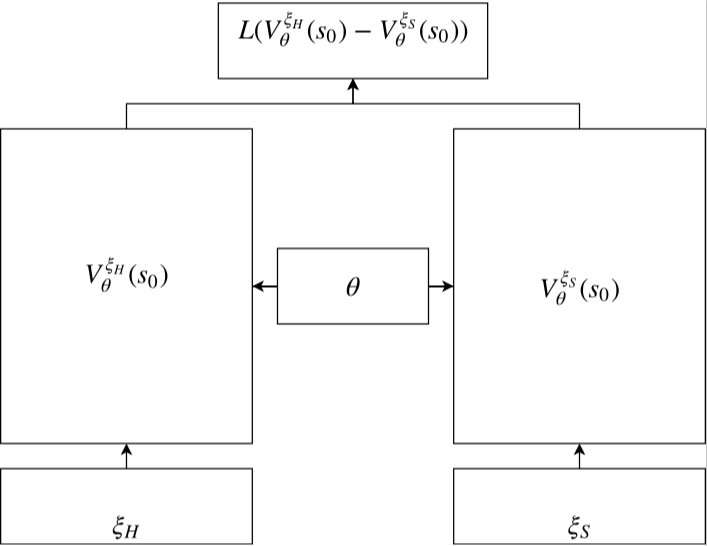

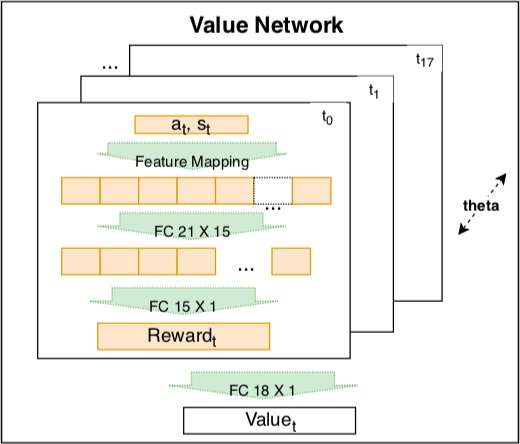

The auto-tuning framework developed at Baidu includes a new rank-based conditional inverse reinforcement learning algorithm, which learns the parameters of the reward/cost functional from human driving data. According to the researchers, its training is more efficient than that of most inverse reinforcement learning algorithms, and it can be applied to different driving scenarios.
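To give a rough sense of what learning cost parameters from human driving data by ranking can look like, here is a minimal sketch in Python. It is not the algorithm from the paper: it assumes a simple linear cost over three hypothetical trajectory features (speed deficit, jerk, obstacle proximity), uses made-up toy data, and applies a plain hinge ranking loss so that each human-demonstrated trajectory ends up cheaper than an alternative sampled trajectory. All names and numbers are illustrative assumptions.

```python
# Toy sketch of rank-based cost tuning. Everything here is hypothetical:
# the features, the data, and the linear cost are illustrative assumptions,
# not the method or the API of the Apollo platform.

def cost(weights, features):
    """Linear cost: weighted sum of trajectory features."""
    return sum(w * f for w, f in zip(weights, features))


def rank_hinge_update(weights, demo, sample, margin=1.0, lr=0.05):
    """One subgradient step on max(0, margin + cost(demo) - cost(sample))."""
    violation = margin + cost(weights, demo) - cost(weights, sample)
    if violation <= 0:
        return weights  # demo is already cheaper than the sample by the margin
    # The subgradient with respect to the weights is (demo - sample).
    # Step against it, and clamp at zero so every feature stays a penalty.
    return [max(0.0, w - lr * (d - s)) for w, d, s in zip(weights, demo, sample)]


def train(pairs, n_features, epochs=200, margin=1.0, lr=0.05):
    """Fit cost weights so each demo trajectory ranks below its sampled rival."""
    weights = [1.0] * n_features
    for _ in range(epochs):
        for demo, sample in pairs:
            weights = rank_hinge_update(weights, demo, sample, margin, lr)
    return weights


# Each pair is (human demo features, sampled alternative features), with
# features ordered as [speed_deficit, jerk, obstacle_proximity].
DEMO_PAIRS = [
    ([0.2, 0.1, 0.1], [0.1, 0.1, 0.9]),  # sample is faster but far too close
    ([0.3, 0.2, 0.2], [0.3, 0.8, 0.2]),  # sample is much less smooth
    ([0.2, 0.1, 0.1], [0.9, 0.1, 0.1]),  # sample is needlessly slow
]

learned_weights = train(DEMO_PAIRS, n_features=3)
print("learned weights:", learned_weights)
```

Because training only needs recorded trajectory pairs, a loop like this can run entirely offline; the learned weights would then be handed to a planner that minimizes the same cost.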

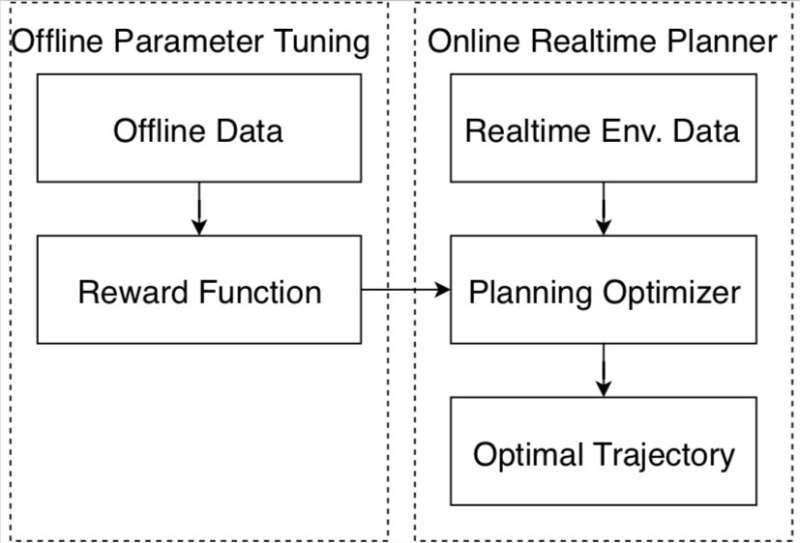

The framework also includes an offline training strategy, offering a safe way for researchers to adjust parameters before an autonomous vehicle is tested on public roads. It also collects data from expert drivers and information about the environment, automatically labeling these so they can be analyzed by the reinforcement learning algorithm.

"I think we developed a safe pipeline to make a machine learning scalable system by using human demonstration data," Fan said. "The open loop human demo data are collected and do not need extra labeling. Since the training process is also offline, our method is suitable for autonomous driving motion planning, keeping public road test safety."

The researchers evaluated a motion planner tuned using their framework both in simulation and in public road tests. Compared to existing approaches, their data-driven method was better able to adapt to different driving scenarios, performing consistently well under a variety of conditions.

"Our research is based on Baidu Apollo Open Source Autonomous Driving platform," Fan said. "We hope more and more people from academia and industry can contribute to the autonomous driving ecosystem through Apollo. In future, we plan to improve the current framework of Baidu Apollo to a machine learning scalable system that can systematically improve the scenario coverage of autonomous driving cars."

More information: An Auto-tuning Framework for Autonomous Vehicles. arXiv: 1808.04913v1 [cs.RO]. arxiv.org/abs/1808.04913

Abstract

Many autonomous driving motion planners generate trajectories by optimizing a reward/cost functional. Designing and tuning a high-performance reward/cost functional for Level-4 autonomous driving vehicles with exposure to different driving conditions is challenging. Traditionally, reward/cost functional tuning involves substantial human effort and time spent on both simulations and road tests. As the scenario becomes more complicated, tuning to improve the motion planner performance becomes increasingly difficult. To systematically solve this issue, we develop a data-driven auto-tuning framework based on the Apollo autonomous driving framework. The framework includes a novel rank-based conditional inverse reinforcement learning algorithm, an offline training strategy and an automatic method of collecting and labeling data. Our auto-tuning framework has the following advantages that make it suitable for tuning an autonomous driving motion planner. First, compared to that of most inverse reinforcement learning algorithms, our algorithm training is efficient and capable of being applied to different scenarios. Second, the offline training strategy offers a safe way to adjust the parameters before public road testing. Third, the expert driving data and information about the surrounding environment are collected and automatically labeled, which considerably reduces the manual effort. Finally, the motion planner tuned by the framework is examined via both simulation and public road testing and is shown to achieve good performance.

© 2018 Tech Xplore