December 7, 2017 weblog

When AI is made by AI, results are impressive

(Tech Xplore)—Researchers exploring AI systems are making news and familiarizing the public with terms like reinforcement learning and machine learning. Recent headlines are still making some heads turn in surprise. AI software is "learning" how to replicate itself and to build its own AI child.

In this case, Google's AI created its child AI through reinforcement learning, in an entirely automated process.

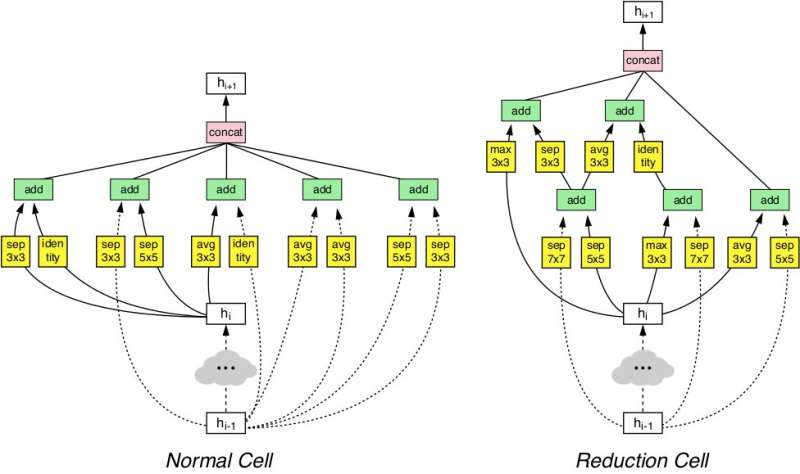

Meet 'NASNet.' Google researchers refer to it as a novel architecture.

Vaughn Highfield in Alphr noted that this child AI is capable of a specific task: image recognition. The child AI was tasked with recognizing objects in a video in real time.

Futurism expanded on what took place. "AutoML acts as a controller neural network that develops a child AI network for a specific task. For this particular child AI, which the researchers called NASNet, the task was recognizing objects—people, cars, traffic lights, handbags, backpacks, etc.—in a video in real-time."

The Google Brain team had introduced the AutoML project earlier this year. Dom Galeon and Kristin Houser of Futurism wrote, "AutoML would evaluate NASNet's performance and use that information to improve its child AI, repeating the process thousands of times."
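For readers who want a feel for that propose, evaluate, improve loop, here is a minimal toy sketch in Python. It is not Google's code and not the NASNet search itself. The search space, the `toy_reward` scoring function and the `search` helper are hypothetical stand-ins invented for illustration. A simple REINFORCE-style update with a moving-average baseline plays the role of the controller, and a made-up formula replaces the expensive step of actually training a child network.

```python
import math
import random

# Hypothetical search space: each "child" is described by two choices.
SEARCH_SPACE = {
    "num_layers": [2, 4, 6],
    "filter_size": [3, 5, 7],
}


def toy_reward(arch):
    """Stand-in for training a child network and measuring its accuracy.

    This made-up score peaks at 4 layers with filter size 3. A real system
    would train the child and return its validation accuracy instead.
    """
    return (1.0
            - 0.1 * abs(arch["num_layers"] - 4)
            - 0.05 * abs(arch["filter_size"] - 3))


def softmax(logits):
    """Turn raw preference scores into sampling probabilities."""
    top = max(logits)
    exps = [math.exp(x - top) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def search(steps=2000, lr=0.1, seed=0):
    """Run the toy controller loop and return its preferred architecture."""
    rng = random.Random(seed)
    # Controller "policy": one preference score (logit) per option.
    logits = {key: [0.0] * len(opts) for key, opts in SEARCH_SPACE.items()}
    baseline = 0.0  # moving average of recent rewards

    for _ in range(steps):
        # 1. The controller proposes a child by sampling each choice.
        picks = {}
        for key, opts in SEARCH_SPACE.items():
            probs = softmax(logits[key])
            picks[key] = rng.choices(range(len(opts)), weights=probs)[0]
        arch = {key: SEARCH_SPACE[key][idx] for key, idx in picks.items()}

        # 2. The child is evaluated (here: scored by the toy formula).
        reward = toy_reward(arch)

        # 3. The score is fed back: choices that beat the recent average
        #    become more likely, the rest become less likely.
        advantage = reward - baseline
        baseline = 0.9 * baseline + 0.1 * reward
        for key, chosen in picks.items():
            probs = softmax(logits[key])
            for i, p in enumerate(probs):
                grad = (1.0 if i == chosen else 0.0) - p
                logits[key][i] += lr * advantage * grad

    # Report the option the controller now prefers for each choice.
    return {
        key: SEARCH_SPACE[key][max(range(len(vals)), key=vals.__getitem__)]
        for key, vals in logits.items()
    }


if __name__ == "__main__":
    print(search())
```

Run as a script, the sketch prints the architecture the toy controller ends up preferring after a couple of thousand rounds. The real system differs in scale and detail, but the shape of the loop is the one the Futurism writers describe: propose, evaluate, adjust, repeat.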

How that worked: Highfield called the process "endless tweaking." Karla Lant in Futurism commented: "Much of metalearning is about imitating human neural networks and trying to feed more and more data through those networks. This isn't—to use an old saw—rocket science. Rather, it's a lot of plug and chug work that machines are actually well-suited to do once they've been trained."

(An upside is that "if human engineers are spending less time on the grunt work involved in creating the systems, they'll have more time to devote to oversight and refinement.")

Results: NASNet outperformed all other systems when tested on what the blog authors called "two of the most respected large-scale academic data sets in computer vision."

NASNet was 82.7% accurate at classifying images in ImageNet's validation set.

Barret Zoph, Vijay Vasudevan, Jonathon Shlens and Quoc Le wrote about the team's achievement in a blog post headlined "AutoML for large scale image classification and object detection." They reported that "On ImageNet image classification, NASNet achieves a prediction accuracy of 82.7% on the validation set, surpassing all previous Inception models that we built."
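As a rough illustration of what a figure like that measures, the sketch below computes accuracy as the share of images whose predicted label matches the true label. It assumes the reported number is this kind of single-guess ("top-1") accuracy, which the quoted passage does not spell out. The function name and the four sample labels are made up for the example.

```python
def top1_accuracy(predicted_labels, true_labels):
    """Fraction of items whose single predicted label equals the true label."""
    if len(predicted_labels) != len(true_labels):
        raise ValueError("both lists must have the same length")
    if not true_labels:
        raise ValueError("need at least one labeled example")
    correct = sum(p == t for p, t in zip(predicted_labels, true_labels))
    return correct / len(true_labels)


if __name__ == "__main__":
    # Hypothetical predictions for four validation images.
    predicted = ["cat", "dog", "car", "dog"]
    actual = ["cat", "dog", "bus", "dog"]
    print(f"{top1_accuracy(predicted, actual):.1%}")
```

Three of the four made-up predictions match, so the toy example scores 75%. The same ratio, taken over a full validation set, yields a headline number like the one the researchers reported.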

Alphr's crosshead asked, "Is this the beginning of the end for humanity?" One can forgive the high drama, as author Highfield put the Google work in perspective. "Obviously, in its current guise, NASNet isn't going to be the downfall of humanity. It is, however, the key to how we build better AI systems in the future."

Highfield in Alphr said, "With self-learning AI and AI's that can also moderate and alter other AIs, we could create AI that is better for autonomous vehicles or automated factories."

The blog authors said they have open-sourced NASNet for inference on image classification and for object detection in the Slim and Object Detection TensorFlow repositories. "We hope that the larger machine learning community will be able to build on these models to address multitudes of computer vision problems we have not yet imagined."

AutoML is creating "more powerful, efficient systems than human engineers can," said Lant in Futurism.

More information: AutoML for large scale image classification and object detection, research.googleblog.com/2017/1 … rge-scale-image.html

© 2017 Tech Xplore